- cross-posted to:

- [email protected]

A.I. Is Making the Sexual Exploitation of Girls Even Worse::Parents, schools and our laws need to catch up to technology, fast.

But they’re banning printed books in libraries instead…

I’ve always taken the “protect our children” argument to have an implied “…from the knowledge that will actually protect them from abusers” based on the things the argument is trotted out for.

Always be wary when they say either “protect the children” or “for your safety.”

Can’t exploit kids as easily when they know what exploitation is.

I called this an unpopular opinion before, but maybe it’s just an uncomfortable one

This isn’t going away. It’s in the wild, there’s no putting it back in the bottle. Maybe, let’s take this chance to stop devaluing women because their nudes exist. Men can post nudes with zero consequences - what’s the logic here? IDGAF if they’re a teacher with an only fans, if everyone can be rendered nude, no one can be.

Let’s live in a post nudes world. Next time a woman is about to get fired over nudes, let’s say “it’s probably ai generated, you’re disgusting for suggesting such a thing”. Let them do it behind closed doors, or we shame them relentlessly. Anyone sharing nudes without consent should be the target here, who cares if they’re generated, shared with trusted partners, or shared publicly for their own reasons.

The people bringing them into an inappropriate setting are the ones doing something wrong. No one should be shamed or feel fear because their nudes are being passed around - they should only feel disgust.

Men can post nudes with zero consequences - what’s the logic here?

I’m not really sure if that’d be the case if I did that

Counterpoint, a congressman released a sex tape of himself with a prostitute.

I’ve never heard of a man getting fired because someone stumbled across his nudes - not saying it’s never happened, but it doesn’t happen much. I’ve heard of plenty of female teachers getting fired for it, but also female office workers.

It seems like there’s this idea that being able to see a woman nude somehow discredits her and undermines her authority. The same idea doesn’t exist for men

And I’m pretty confident that you could post nudes online, and even if someone found them, it wouldn’t end up with you ending up in HR or having to justify yourself… Maybe you could have personal complications because of it, but probably not social ones

I think it’s at least hyperbole. The consequences aren’t the same, aren’t even equivalent, but there are still negative consequences.

Sounds great. But perhaps you have forgotten the existence of the patriarchy? Basically the very thing keeping double standards like that in place.

So fight those standards? Do you think “the patriarchy” is a nebulous state that affects society like a modifier in a video game? Reject those standards in your interactions with other people and you’re effectively fighting the patriarchy. That attitude only leads you to accept things as they are while you take the path of least resistance.

You know why feminism has “waves,” right? Because it doesn’t fucking stick.

You make it sound so simple.

What they’re suggesting is that we make a concerted effort to fight against this specific aspect of the patriarchy.

This is a human problem, not an AI problem.

Maybe if we hadn’t neglected it for the past century…

It’s both.

AI is definitely making things worse. When I was at school there was no tool for creating a deep fake of girls; now boys sneak a pic and use an app to undress them. That then gets shared, girls find out and obviously become distressed. Without AI, boys would have to either sneak into toilets/changing rooms or physically remove girls’ clothes. Neither of those was happening on such a large scale in schools before, as it would create a shit show.

Also most jurisdictions don’t actually have strict AI laws yet which is making it harder for authorities to deal with. If you genuinely believe that AI isn’t at fault here then you’re ignorant of what’s happening around the world.

https://www.theguardian.com/technology/2024/feb/29/clothoff-deepfake-ai-pornography-app-names-linked-revealed That’s an article about one company that provides an app for deep fakes. It’s a shell corp so not easy to shut down through the law and arrest people, also hundreds of teenage girls have been affected by others creating non consensual nudes of them.

When I was a kid I used to draw dirty pictures and beat off to them. AI image creation is a paint brush.

I very much disagree with using it to make convincing deepfakes of real people, but I struggle with laws restricting its use otherwise. Are images of ALL crimes illegal, or just the ones people dislike? Murder? I’d call that the worst crime, but we sure do love murder images.

AI is definitely making things worse. When I was at school there was no tool for creating a deep fake of girls; now boys sneak a pic and use an app to undress them. That then gets shared, girls find out and obviously become distressed. Without AI, boys would have to either sneak into toilets/changing rooms or physically remove girls’ clothes.

I’m sorry but this is bullshit. You could “photoshop” someone’s face / head onto someone else’s body already before “AI” was a thing. Here’s a tutorial that allows you to do this within minutes, seconds if you know what you’re doing: https://www.photopea.com/tuts/swap-faces-online/

That’s an article about one company that provides an app for deep fakes. It’s a shell corp so not easy to shut down through the law and arrest people, also hundreds of teenage girls have been affected by others creating non consensual nudes of them.

Also very ignorant take. You can download Stable Diffusion for free and add a face swapper to that too. Generating decent looking bodies actually might take you longer than just taking a nude photo of someone and using my previous editing method though.

You could do everything before, that’s true, but you needed knowledge/time/effort, so the phenomenon was very limited. Now that it’s easy, the number of victims (if we can call them that) is huge. And that changes things. It’s always been wrong. Now it’s also a problem

This is right. To do it before you had to be a bit smart and motivated. That’s a smaller cross section of people. Now any nasty fuck with an app on their phone can bully and harass their classmates.

I’m not sure you listened to what I said or even attempted it yourself. The time / effort here is very similar, both methods have their own quirks that make them better or worse than the other, both methods however are very fast and very easy to do. In both cases the result should just be ignored as far as personal feelings go, and reported as far as legal matters go, or reported to your teachers. You don’t need special laws to file for harassment or even possible blackmail. This whole thing is just overblown fake hysteria and media panic because “AI” is such a hot topic at the moment. In a few years this will all go away again because no one really cares that much and real leaked nudes will possibly even be declared a deepfake to confuse people.

The time / effort here is very similar, both methods have their own quirks that make them better or worse than the other, both methods however are very fast and very easy to do.

You’re lying to yourself and you must know that, or you’re just making false assumptions. But let’s go through this step by step.

Now with a “nudify” app:

- install a free app

- snap a picture

- click a button

- you have a fake nude

Before:

- snap a picture

- go to a PC

- buy Photoshop for $30/month (sure) or search for a pirated version, download a crack, install it and pray that it works

- find a picture that fits with the person you’ve photographed

- read a guide online

- try to do it

- you have (maybe) a bad fake nude

That’s my first point. Second:

the result should just be ignored as far as personal feelings go

Tell it to the girl who killed herself because everyone thought that her leaked “nudes” were actual nudes. People do not work how you think they do.

You don’t need special laws to file for harassment or even possible blackmail. This whole thing is just overblown fake hysteria and media panic because “AI” is such a hot topic at the moment

True, you probably don’t need new laws. But the emergence of generative AI warrants a public discussion about its consequences. There IS a lot of hype around AI, but generative AI is here and is having/will have a tangible impact. You can be an AI skeptic but also recognise that some things are actually happening.

In a few years this will all go away again because no one really cares that much and real leaked nudes will possibly even be declared a deepfake to confuse people.

For this to happen, things will have to get WAY worse before they get better. And that means people will suffer and possibly kill themselves, like it’s already happened. Are we ready to let that happen?

Also we’re talking only about fake nudes, but if you think about the fact that GenAI is going to spread throughout every aspect of our world, your point becomes even more absurd

buy Photoshop for $30/month (sure) or search for a pirated version, download a crack, install it and pray that it works

I literally gave you a tutorial to a free web app and you come here with a massive bad faith text wall that starts with “you must buy / pirate literal photoshop to do image editing”. Sorry but the difficulty that you are seeing here is not the method but the thing in front of your monitor.

- find a picture that fits with the person you’ve photographed

- read a guide online

- try to do it

- you have (maybe) a bad fake nude

Speaking of lying to oneself… Where are your several attempts at nudifying, just to figure out that you actually need a good photo too, with good lighting, clothes that contrast well with the background, a pose that is probably frontal and without arms obstructing, and then praying the model can manage to draw a half-decent, realistic-looking body over it without any artifacts or mutations? Generative models like these aren’t magic and have many faults, and you clearly show that you have absolutely no clue about that topic if you think you can just snap a picture and press a button.

Tell it to the girl who killed herself because everyone thought that her leaked “nudes” were actual nudes. People do not work how you think they do.

As tragic as that is, a photoshopped picture would’ve resulted in the same outcome and you can probably even find such cases if you dig through old news articles too.

But the emergence of generative AI warrants a public discussion about its consequences.

No, it only shows that we’ve slept on law enforcement related to digital topics for decades, thanks to all the boomers in politics who have even less of a clue about that topic than people like you, and all those who ridiculed everyone using computers before they eventually reached mainstream audiences. The problem here is also that fear mongering and dishonest “discussions” like this only lead to draconian overbearing laws that will end up really hurting the wrong end of it, while not doing much if anything for the actual issue behind it, which isn’t generative “AI” or manual photo editing. It’s akin to using the topics of terrorism or child pornography to ban things like encryption or implement web filters, or other highly invasive mass surveillance methods such as data logging or things like facial recognition.

Are we ready to let that happen?

I mean, I’m not, as I wasn’t even before harassment of this type was a thing just without the “AI” aspects of it. But you all were ready to let it happen, again, until the “AI” aspects of it started to cause the media hysteria. If you dig through articles about cases like this or of a similar nature (cyber-bullying was a popular umbrella term before), you find that people simply did not take this kind of stuff seriously, causing those acts to go unpunished. And that was, and still is, the main issue. Many, if not most, countries don’t even give you as a person the rights to an image of yourself. How can you then expect someone who edits photos of a person and publishes them without that person’s consent to be legally liable? After all, what crime would they have committed if the exposed person in the picture isn’t even real except for maybe the head, which was publicly visible at the time of the photo too?

Also we’re talking only about fake nudes, but if you think about the fact that GenAI is going to spread throughout every aspect of our world, your point becomes even more absurd

Here’s the thing: This is going to happen either way. That’s why we need to understand that we rather have to find ways to live with it. You could ban generative models, but that would mostly just stop the legal usage, while others would just continue to use it illegally. Maybe your average inept zoomer kid would have trouble finding an app for his smartphone to do it, but it would still happen.

Photoshop has existed for quite some time. Take photo, google naked body, paste face on body. The ai powered bit just makes it slightly easier. I don’t want a future where my device is locked down and surveilled to the point I can’t install what I want on it. Neither should the common man be excluded from taking advantage of these tools. This is a people problem. Maybe culture needs to change. Limit phone use in schools. Technical solutions will likely only bring worse problems. There are probably no lazy solutions here. This is not one of those problems you can just hand over to some company and tell them to figure it out.

Though I could get behind making it illegal to upload and store someone’s likeness unless explicit consent was given. That is long overdue. Though some big companies would not get behind that. So it would be a hard sell. In fact, I would like all personal data be illegal to store, trade and sell.

Photoshop has existed for quite some time. Take photo, google naked body, paste face on body. The ai powered bit just makes it slightly easier.

Slightly easier? That’s one hell of an understatement. Have you ever used Stable Diffusion?

Though I could get behind making it illegal to upload and store someone’s likeness unless explicit consent was given. That is long overdue. Though some big companies would not get behind that.

But many big companies would love it. Basically, it turns a likeness into intellectual property. Someone who pirates a movie would also be pirating likenesses. The copyright industry would love it; the internet industry not so much.

Licensing their likeness - giving consent after receiving money, if you prefer - would also be a new income stream for celebrities. They could license their likeness for any movie or show and get a pretty penny without having to even show up. They would just be deep-faked onto some skilled, low-paid double.

AI is a genie that can’t be put back into its bottle.

Now that it exists you can’t make it go away with laws. If you tried, at best all you’d do is push it to sketchy servers hosted outside of the jurisdiction of whatever laws you passed.

AI is making it easier than it was before to (ab)use someone by creating a nude (or worse) with their face. That is a genuine problem, it existed before AI, and it’s getting worse.

I’m not saying you have to like it, but if you think laws will make that unavailable you’re dreaming.

Paywall. Nope. Exploit those girls with your AI then. The messages against it can’t be heard without money.

Why do you think that journalists don’t deserve to get paid? People on Lemmy are even more entitled than people on Reddit.

The messages against it can’t be heard without money.

You shouldn’t need an article to tell you not to exploit people.

Hey can you put that comment behind a paywall? I don’t want to read it

Why do you think that journalists don’t deserve to get paid?

they didn’t say that. you’re making a leap of logic and putting words in their mouth

Obviously they didn’t say exactly that, but how else are they supposed to earn money?

They already make money on the ads, and of course you have to make an account, so they sell your info… and then they fire the journalists… I would pay for a good source of real news that didn’t have ads and didn’t sell my info. But they don’t exist.

What ads? I don’t see any ads.

Their privacy policy also doesn’t seem too bad compared to most services.

and of course you have to make an account, so they sell your info… and then they fire the journalists…

What are you talking about? Do you think a 170 year old newspaper is an exit scam designed to get personal data, sell it, and then fuck off?

Novara media stream 6pm weekdays on YouTube, UK based news, free but with a supporter donation model

I’ve encountered many New York Times articles behind a paywall. I was :o that this one wasn’t behind a paywall for me.

I use archive.md for paywalls.

I hope the New York Times doesn’t sell user data. If it does, public rage can move it from being one of the top news sites to the bottom.

sell their stories to newspapers

And those newspapers make money through magic?

Advertising?

Ads that pay enough are quite terrible for privacy though and why should manipulative ads be the only way you are allowed to finance your news? Plus the fact that a lot of people use adblockers (me included)

I agree that paywalls are annoying, and I also like free stuff, but crying about it like they did is just so entitled.

I don’t give a fuck. sell subscriptions, push ads, whatever. but if you deny access based on an ability to pay, what you have to say isn’t worth my time.

I can’t believe you’re pushing that dumb take in the same comment that you’re suggesting the newspapers sell subscriptions. The cognitive dissonance is astounding.

The internet has made people feel very entitled to every form of content.

If you feel these organizations and their posts aren’t worth your time, stop commenting on them like a smug edgelord without actual solutions.

Aren’t most things in life restricted by one’s ability or willingness to pay?

Conversely why do I need to pay to read the opinion section?

If you think your message is important, you shouldn’t bury it. Their message isn’t important. Their message is just to bring in money. “Journalists” can fuck right off. A real journalist is someone in the right place at the right time and telling about it because it’s important, not to make a career out of it. Consider Karl Jobst. He’s a journalist. He’s even getting paid. You know what he’s not doing? That’d be sitting in an office promoting a website with a subscription fee. Jobbers are not journalists.

Sure, but don’t people that write important messages deserve to live?

I’ve never been paid for mine. Get a job.

Being a journalist is their job.

Why do you think that journalists don’t deserve to live by being journalists?

Why don’t you do your job for free? Why don’t doctors work for free if their job is so important?

Also, where are your alleged “important messages”? I would like to read them.

Btw, I had never heard of your Karl guy but he does get paid for his work. Because of course he does. He even has a Patreon.

And I wouldn’t really call talking about speedruns to be important messages.

Think I should be paid for when I just do what’s right when it’s right? Rewarded maybe but idk about as a profession. Open source devs rarely get a payroll and they contribute to the world far more than payroll devs. Information is only good when it’s free.

As much as I would like to blame AI, Photoshop has been doing this for a long time already. I think the public AI sites already do CSAM scanning and such for this, but somebody could still run it locally.

And how many times have you made this comment, only to have it pointed out that there is a big fucking difference between a human manually creating fake images via Photoshop at human speed using human skills, versus automating the process so it can be done en masse at the push of a button?

Because that’s a really big fucking difference.

Think: musket versus gatling gun. Yeah, they both shoot bullets, but that’s about where the similarity ends.

Is the genie out of the bottle at this point? Probably.

But to claim this doesn’t represent a massive shift because Photoshop? Sorry but that’s at best naive, and it’s starting to get exhausting seeing this “argument” trotted out repeatedly by AI apologists.

Yeah ai would let you make more kiddie porn at a faster rate, but you can’t really regulate that. If somebody wanted to draw animated kiddie porn they could still do that. How far would you go until you ban crayons?

I digress, yes, I don’t like that this shit is happening and there really isn’t a way. Making kiddie porn is already illegal and there’s no way to regulate these tools so we can just try our best to catch them.

Some people say this ai thing for porn could lead to less sexual abuse. It’s so dark I’m kind of speechless but maybe it could even reduce actual people from getting physically hurt but I have no clue, time will tell.

If somebody wanted to draw animated kiddie porn they could still do that. How far would you go until you ban crayons?

It’s genuinely impressive how completely you missed my point.

How about another analogy: US federal law allows people to own individual firearms, but not grenades.

But they’re both things that kill people, right? Why would they be treated differently?

Hint: it’s about scale.

The same is true of pipe bombs. But anyone can make a pipe bomb. Genie is out of the bottle, right? So why are there laws regulating manufacture and ownership of them? Hmm…

I guess we kind of agree then. AI is a pipe bomb for kiddie porn and the genie is out of the bottle. Not much we can do about it. We will still have laws but that won’t stop anything. I already stated that the easy-to-access AI services already prevent this from happening, and there will be more regulation, though I don’t see what more laws and regulations can bring to the table.

It’s not exactly the fault of AI. It’s a human problem. People have been doing this for years now, but AI does make it easier.

Usually for grade schoolers it’s haha you’re naked not haha you’re a slut now.

Some day we’ll develop better attitudes regarding nudity and sexuality, but not today, and not in the US.

Forbidden computer bobs and vagenes

This is the best summary I could come up with:

But the idea of such young children being dehumanized by their classmates, humiliated and sexualized in one of the places they’re supposed to feel safe, and knowing those images could be indelible and worldwide, turned my stomach.

And while I still think the subject is complicated, and that the research doesn’t always conclude that there are unfavorable mental health effects of social media use on all groups of young people, the increasing reach of artificial intelligence adds a new wrinkle that has the potential to cause all sorts of damage.

So I called Devorah Heitner, the author of “Growing Up in Public: Coming of Age in a Digital World,” to help me step back a bit from my punitive fury.

In the Beverly Hills case, according to NBC News, not only were middle schoolers sexualizing their peers without consent by creating the fakes, they shared the images, which can only compound the pain.

(It should be noted that in the Beverly Hills case, according to NBC News, the superintendent of schools said that the students responsible could face suspension to expulsion, depending on how involved they were in creating and sharing the images.)

I regularly hear from people who say they’re perplexed that young women still feel so disempowered, given the fact that they’re earning the majority of college degrees and doing better than their male counterparts by several metrics.

The original article contains 1,135 words, the summary contains 230 words. Saved 80%. I’m a bot and I’m open source!

This will not be popular, but I welcome this. Once we normalize AI video porn, then videos are no longer trusted like we don’t trust photoshopped images.

Even if the video is real, anyone can just claim it’s AI. Nobody will even need to create their own home videos anymore because the kink is sorta gone now since anyone can claim it’s fake.

I used to agree with this, but hearing interviews with actual victims changed my mind. This only works in theory.

There will be growing pains. The longer it takes to get it normalized, the longer people suffer.

A very ugly side to the technology, I absolutely think this should be considered on the same level as revenge porn and child pornography.

I also fear these kinds of stories will be used to manipulate the public into thinking banning the tools is in their best interest instead of punishing the bad actors.

Your second point here is exactly what we should fear: that it might become legal only for companies and governments to use AI but not the masses.

That’s why I’m of the opinion that we should maybe just get over it. It’s going to continue to become easier and easier to use it for horny reasons. The guy wearing the smart glasses might be seeing every woman around him undressed in real time. We’re just a few years away from that and there is no acceptable way to prevent that.

Don’t we just send people caught with such materials to jail then? Regardless of whether it was AI generated or not. If it is made to look like them, then it clearly shouldn’t be in your possession without their consent.

It’s bad for sexual exploitation of any gender.

Are… both genders currently being exploited equally?

Get to stepping, girls! It’s free real estate.

“both” Why are you assuming there are only 2 genders?

Should they?

That’s the “all lives matter” argument, and it’s disingenuous.

If billionaires are being forced to pay unfair yacht fees, that’s a problem. But that doesn’t mean it’s as immediate and important an issue as starving children, and obviously shouldn’t be prioritized the same. That’s just an example, but “major in the majors”, as my mom taught me…

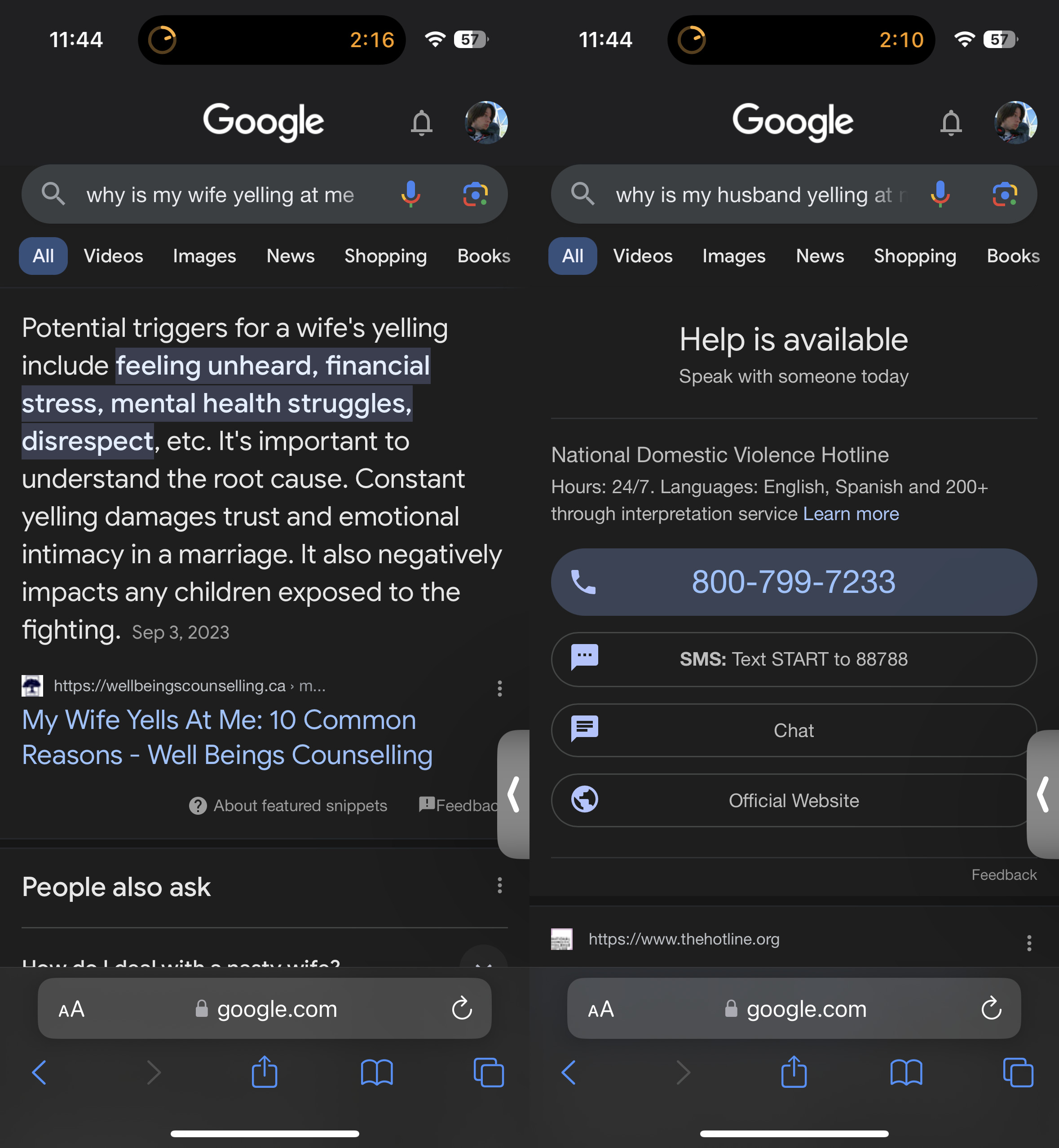

Ignoring the fact that it does happen is the same as ignoring mental health for men, or when Google does this shit…

Starting to lose more and more respect for Lemmy’s userbase as time goes on. I don’t care that you think it’s “disingenuous”, no one should be getting exploited by AI. I don’t give a shit what gender they are. If you think I’m being disingenuous to avoid the issue itself, then oh well, there’s no discussion to be had…

In discussions of this issue I’ve come to the conclusion that a not-small portion of those participating in said discussion would probably be doing the exploiting. I guess I’m just too old (as in, over 25) or too “normal” for Lemmy.

Just because people are talking about one issue right now doesn’t mean that they don’t care about other issues. Pointing out the obvious that nobody should be sexually exploited is doing nothing other than shifting the topic away from the original issue. Hence, the “all lives matter” comparison.

The person you replied to is doing the same thing. Nobody should be sexually exploited, and there’s tons of issues surrounding men’s mental health and abuse. But that’s not what we’re talking about here. We’re talking about AI being used to deepfake nudes and sexually explicit material, which disproportionately affects women.

If they had come in here to talk about men who had had this happen to them, that would be one thing. But they didn’t. They came in here to minimize the impact the issue has on a specific group and to complain about how nobody is talking about a separate issue, respectively.

It’s not, but I’m not in the mood to argue with Ameritards and their idiotic culture war bullshit. You can keep that shit on your side of the ocean.

Are you really comparing young boys to billionaires? Wtf is wrong with you?

Yes, there are fewer boys who are affected by this than girls. But they are in no way privileged in their every day life if they become victims of this.

Reading comprehension fail. ☹️

Totally agree! It’s unfortunate that we live in a sexist ass society that would down vote a post like this! Sexual exploitation is bad regardless of gender and there should be nothing controversial about that!

but mostly girls

I wonder why it is that girls evolved to be sexy but not also men.