The tool, called Nightshade, messes up training data in ways that could cause serious damage to image-generating AI models. It is intended as a way to fight back against AI companies that use artists’ work to train their models without the creator’s permission.

ARTICLE - Technology Review

ARTICLE - Mashable

ARTICLE - Gizmodo

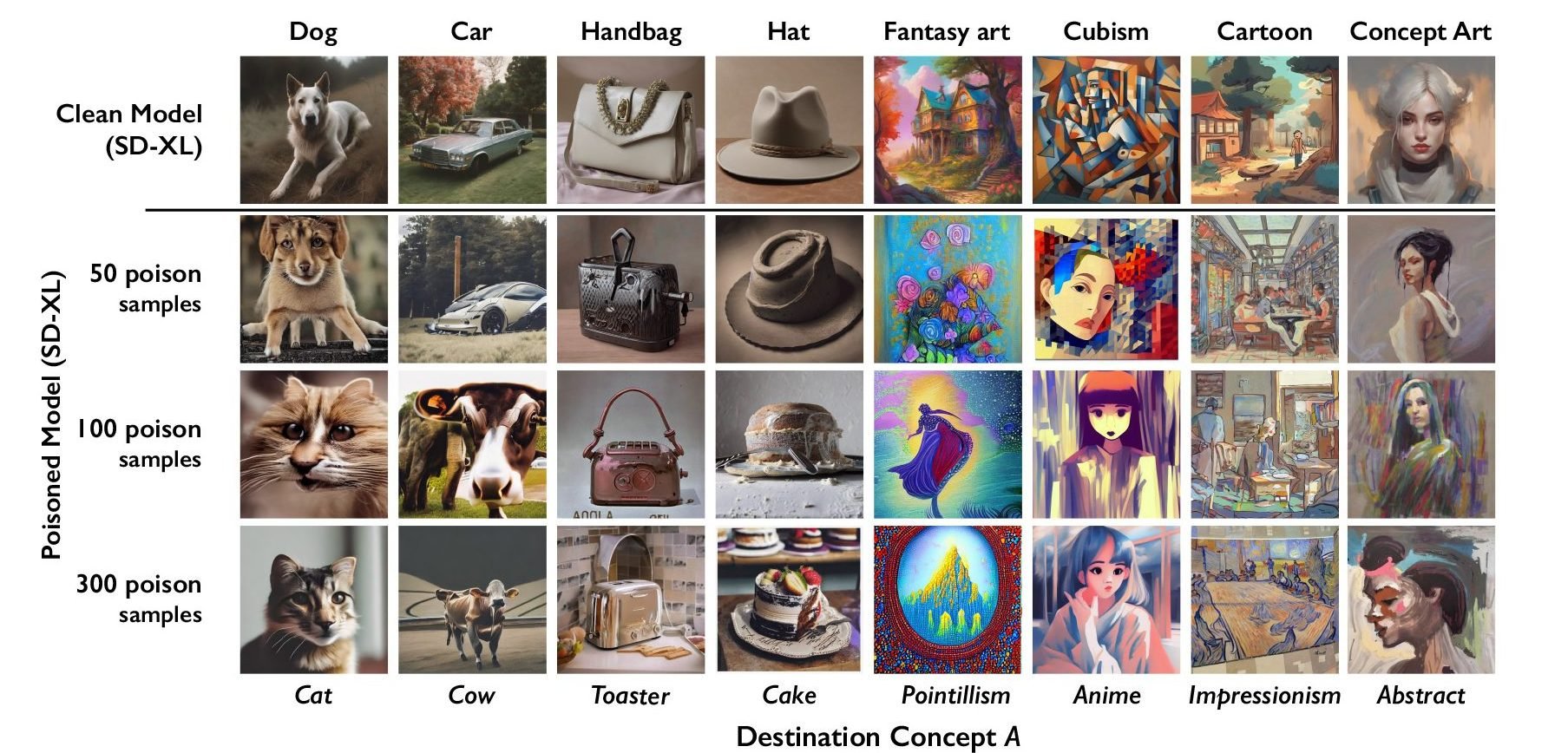

The researchers tested the attack on Stable Diffusion’s latest models and on an AI model they trained themselves from scratch. When they fed Stable Diffusion just 50 poisoned images of dogs and then prompted it to create images of dogs itself, the output started looking weird: creatures with too many limbs and cartoonish faces. With 300 poisoned samples, an attacker can manipulate Stable Diffusion into generating images of dogs that look like cats.

Either that, or AI-gen companies have to hire training set artists or something like that. That’d be better all in all.

Dedicated training artists would be expensive. They would probably buy stock art and make deals with art platforms such as DeviantArt to entice creators to allow their material to be used for training in exchange for small monetary or cosmetic rewards.

I would like AI models to remain free and actually published as files instead of paywalled services.

Wdym remain. All the big players cost money

A large portion of AI art out there is made with Stable Diffusion, which can be run locally for free, and has a robust ecosystem of hobbyist-trained models, LoRAs, etc. There are also somewhat competitive freely available LLMs.

Most attacks on AI that I see function as protectionism, where the biggest companies will end up being fine, but the people trying to do their own thing are the ones to be locked out.