- cross-posted to:

- [email protected]

- [email protected]

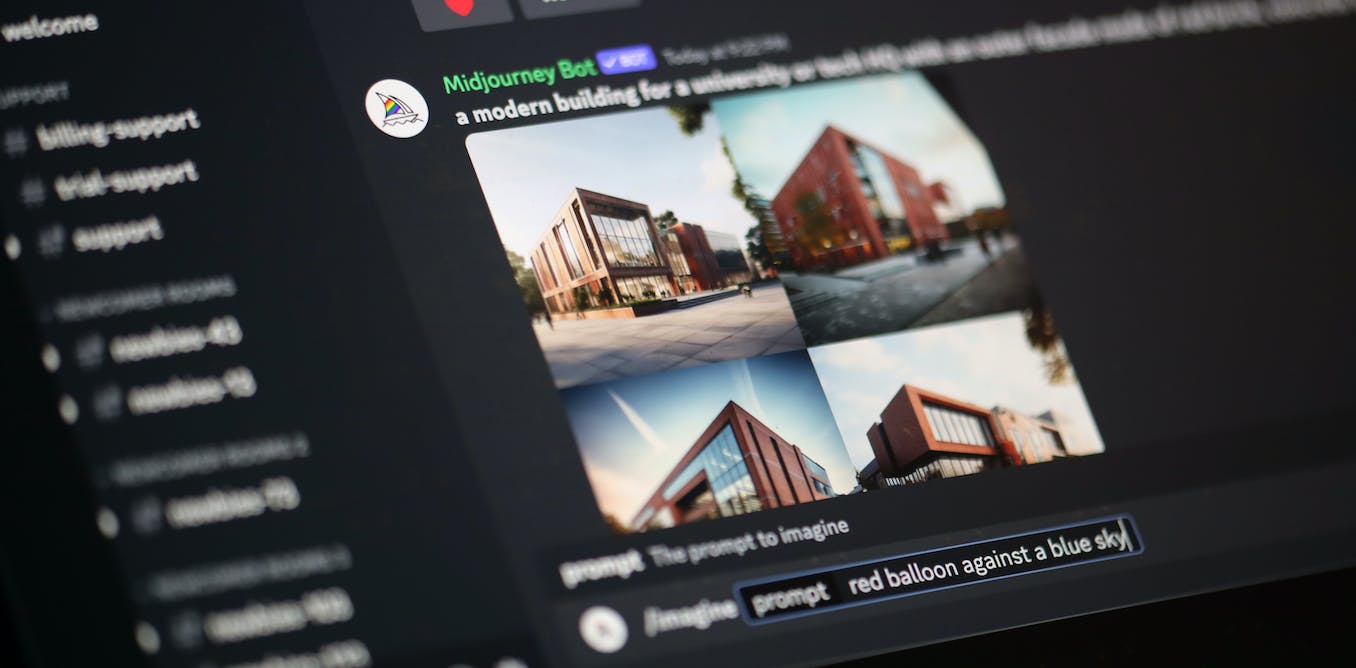

Data poisoning: how artists are sabotaging AI to take revenge on image generators

As AI developers indiscriminately suck up online content to train their models, artists are seeking ways to fight back.

Computers can’t learn. I’m really tired of seeing this idea paraded around.

You’re clearly showing your ignorance here. Computers do not learn; they create statistical models based on input data.

A human seeing a piece of art and being inspired isn’t comparable to a machine reducing that art to 1s and 0s and then adjusting weights in a table somewhere. The machine does not “understand” the concept, nor did it “learn” about a new piece of art.

Enforcement is simple. Any output from a model trained on material its developers don’t hold the copyright for is a violation of copyright against every artist whose art was used illegally to train the model. If the copyright holders of all the training data are compensated and have opted in to having their work used for training, then, and only then, would the output of the model be usable.

There’s no copyright violation. You said it yourself: any output is just the result of a statistical model, and the original art would fall under fair use as a derivative work (if it falls under copyright at all).

Considering most models can spit out training data, that’s not a true statement. Training data may not be explicitly saved, but it can be retrieved from these models.

Existing copyright law can’t be applied here because it doesn’t cover something like this.

It 100% should be a copyright infringement for every image generated using the stolen work of others.

It’s literally in the name. Machine learning. Ignorance is not an excuse.

That’s just one of the dumbest things I’ve heard.

Naming has nothing to do with how the tech actually works. Ignorance isn’t an excuse. Neither is stupidity.

And yet you wield both!