Interesting experiment! Made me think of the book “How We Learn: The New Science of Education and the Brain”.

Never used it :).

Keep me updated on your progress. I barely have time to do it as thoroughly as you, so I’ll be glad to learn from your experiment too.

It’s not so theory-heavy, but I’ve enjoyed the paper titled “What Uncertainties Do We Need in Bayesian Deep Learning for Computer Vision?” by Kendall and Gal of Cambridge.

If you find some nice introductory/theory papers, please share them also :)!

Bayesian neural networks are a thing ;)

Before selling the 5, they should make it so one can buy the 4 x)

Thanks for sharing! I’ll have a look later, it sounds great!

Any way to bypass the paywall for this article :)?

That’s a fairly terrifying scenario. Like putting open doors into our brains

I’m sorry my answer triggered something; it wasn’t meant to. In my experience people here are nice and friendly, so I hope you manage to feel safe and welcomed.

What 😅? Ok I guess 😅

I was just saying it’s hard to make a company spend more “just” for the environment, but it’s still important to do.

I’m trying at mine and it goes exactly as you would think…

In the study, physicians found more inaccuracies and irrelevant information in answers provided by Google’s Med-PaLM and Med-PaLM 2 than in those of other doctors.

It’s a bit like every other use of AI IMO: the challenge is to make people understand that it’s a fancy information retrieval system, and thus flawed and not to be blindly trusted. There was a study on the use of AI in professional settings that showed that models such as ChatGPT helped low performers much more than high performers (who had barely any improvement thanks to the model). If this model is used to help less competent doctors (without judgement, they could be beginning their careers) while maintaining a certain degree of doubt, then that could be very good.

However, the ramifications of a wrong diagnosis from the AI are quite scary, especially considering that AI tends to repeat the biases of its training dataset, and even curated data is not exempt from bias.

I would advise not training your own model but instead using tools like langchain and chroma, in combination with an open model like gpt4all or falcon :).
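To give a rough idea of why retrieval can replace training: the pattern is to find the most relevant documents for a question and paste them into the prompt of an existing model. Below is a minimal sketch of that idea using only the Python standard library. It is not langchain's or chroma's API; the word-count "embedding" and the helper names `embed`, `cosine`, `retrieve`, and `build_prompt` are made up for illustration, and a real setup would use a learned embedding model and a vector store instead.

```python
import math
import re
from collections import Counter


def embed(text):
    """Toy stand-in for an embedding: lowercase word counts (assumption, not a real model)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[w] * b[w] for w in a if w in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(question, documents, k=2):
    """Return the k documents most similar to the question."""
    q = embed(question)
    ranked = sorted(documents, key=lambda d: cosine(q, embed(d)), reverse=True)
    return ranked[:k]


def build_prompt(question, documents, k=2):
    """Build the text that would be sent to an existing (untrained-by-you) model."""
    context = "\n".join(f"- {d}" for d in retrieve(question, documents, k))
    return f"Answer using only this context:\n{context}\n\nQuestion: {question}"


# Hypothetical mini knowledge base, for illustration only.
docs = [
    "Chroma stores document embeddings for similarity search.",
    "Falcon is an openly available language model.",
    "My cat likes to sit in a loaf position.",
]

print(build_prompt("Which tool stores embeddings?", docs, k=1))
```

The resulting prompt would then go to whichever open model you picked; the model itself stays untouched, and only the document collection changes when your data does.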

So in general explore langchain!

Thanks, I hadn’t realized that!

Rocky Linux, AlmaLinux, and Oracle Linux for example.

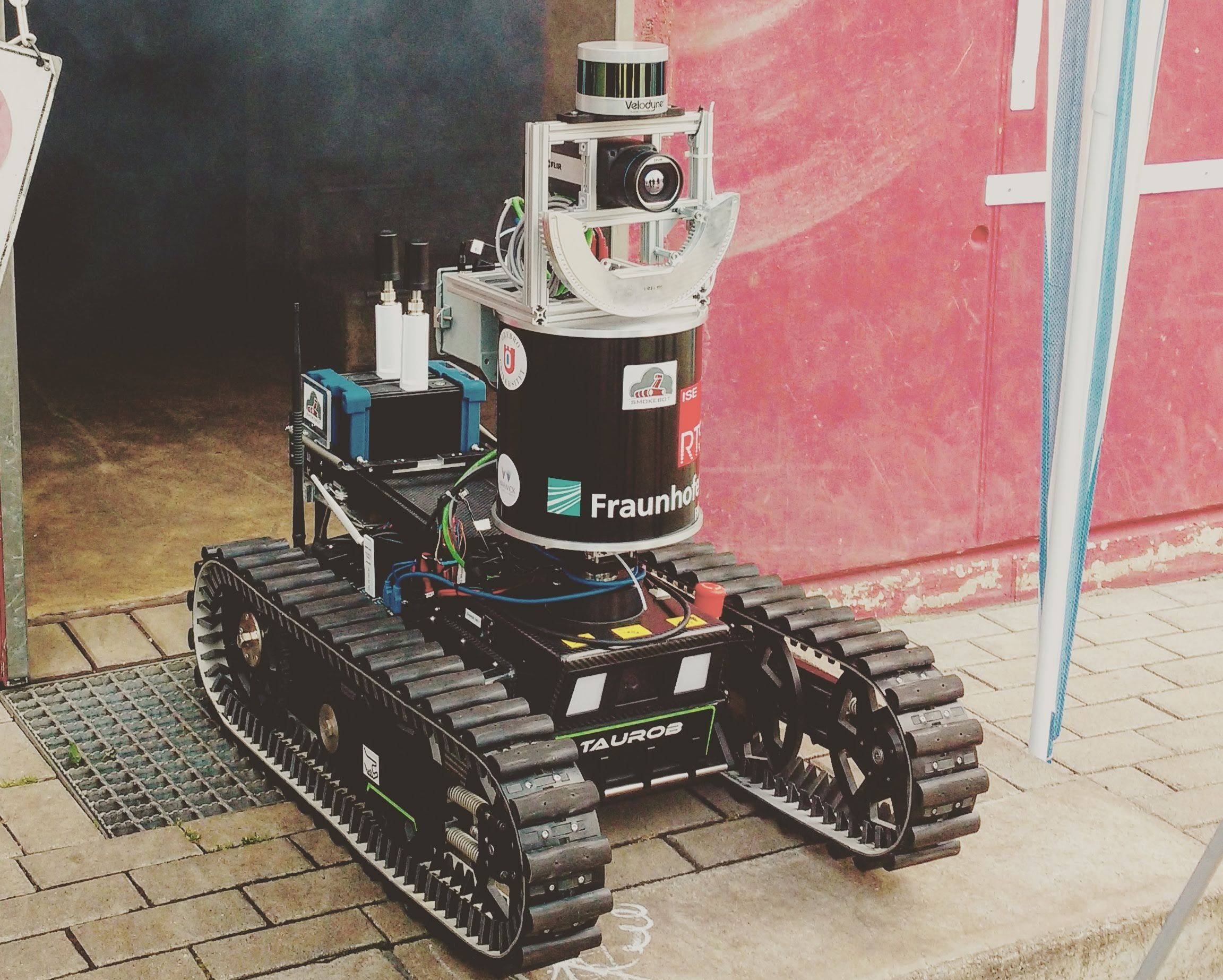

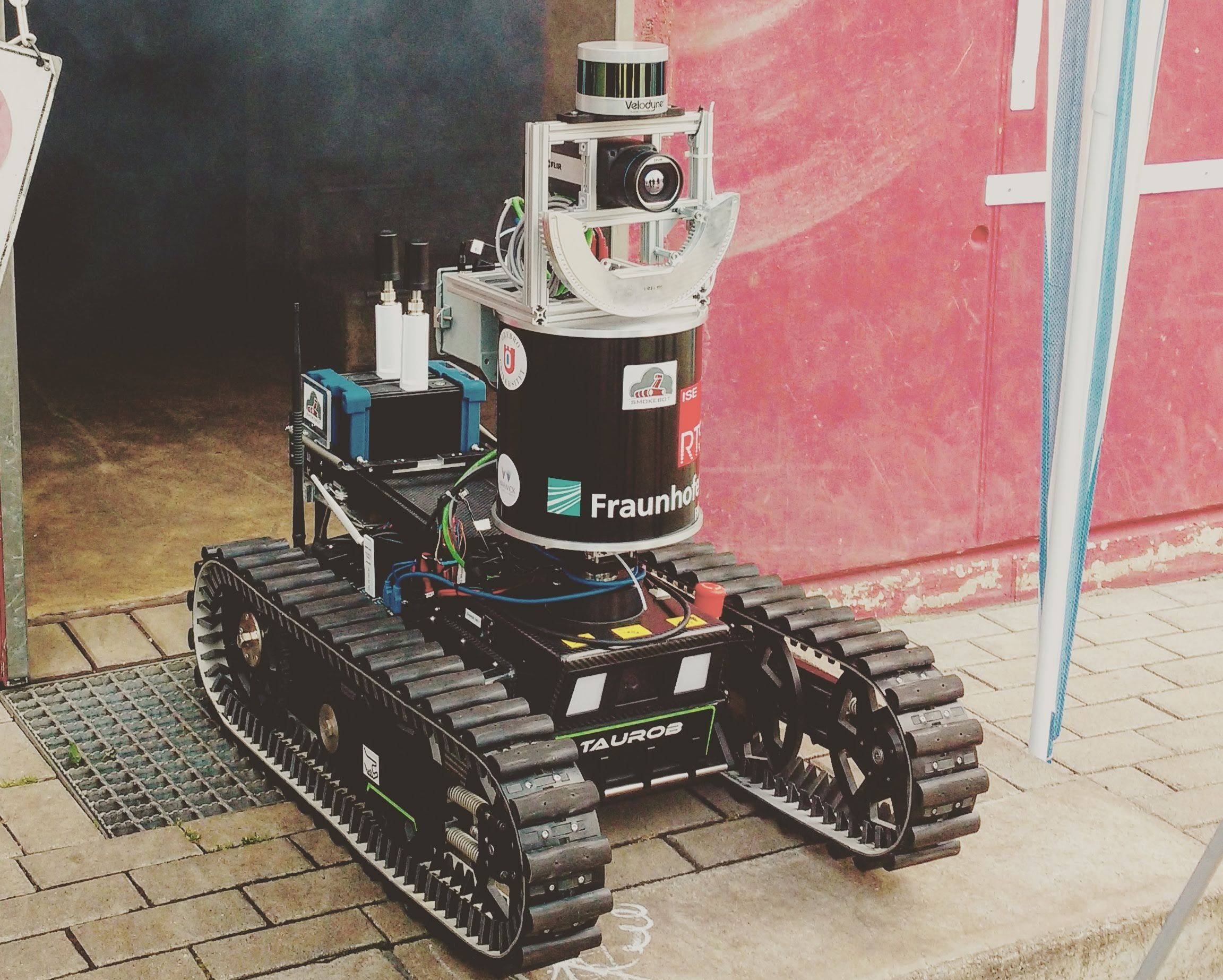

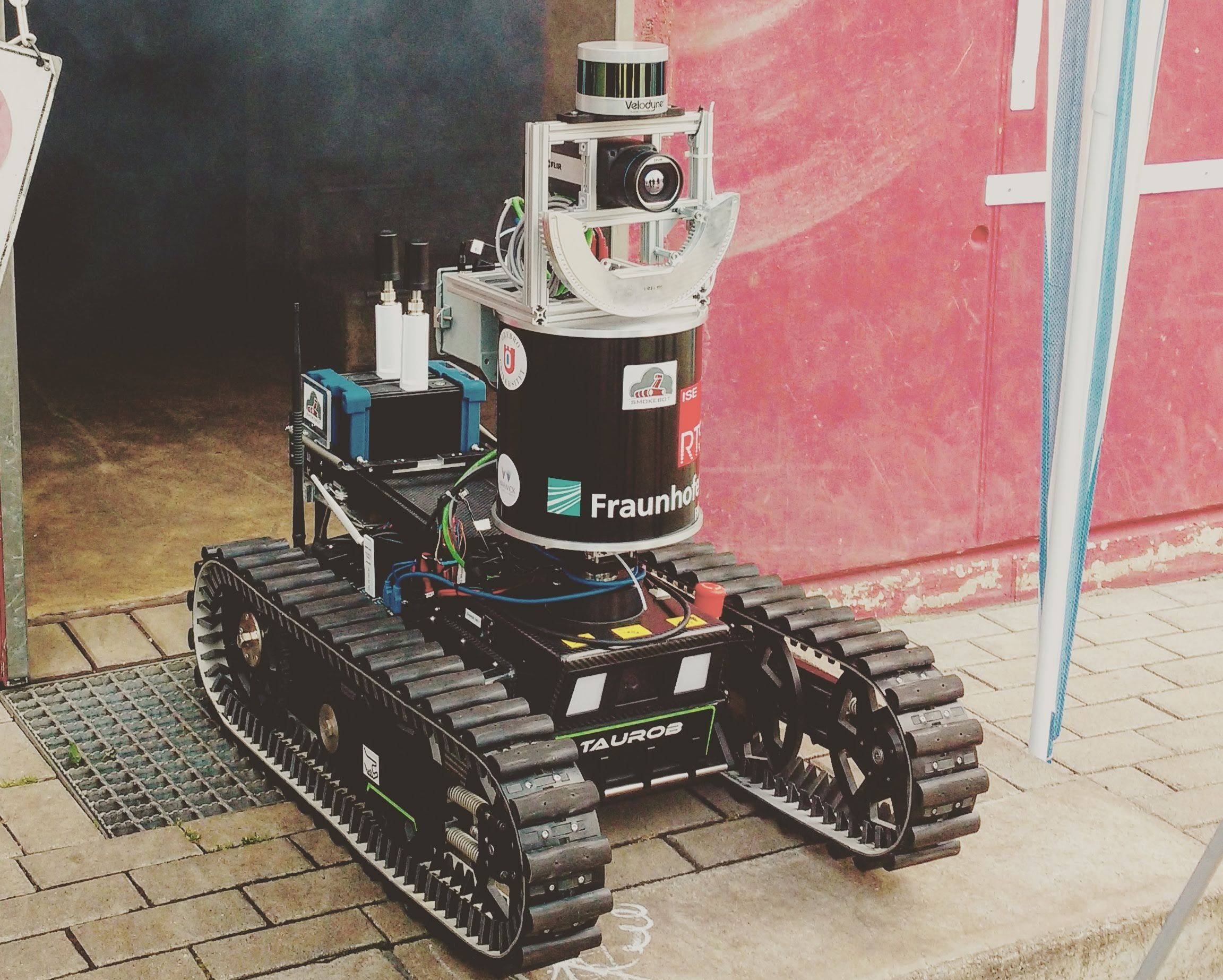

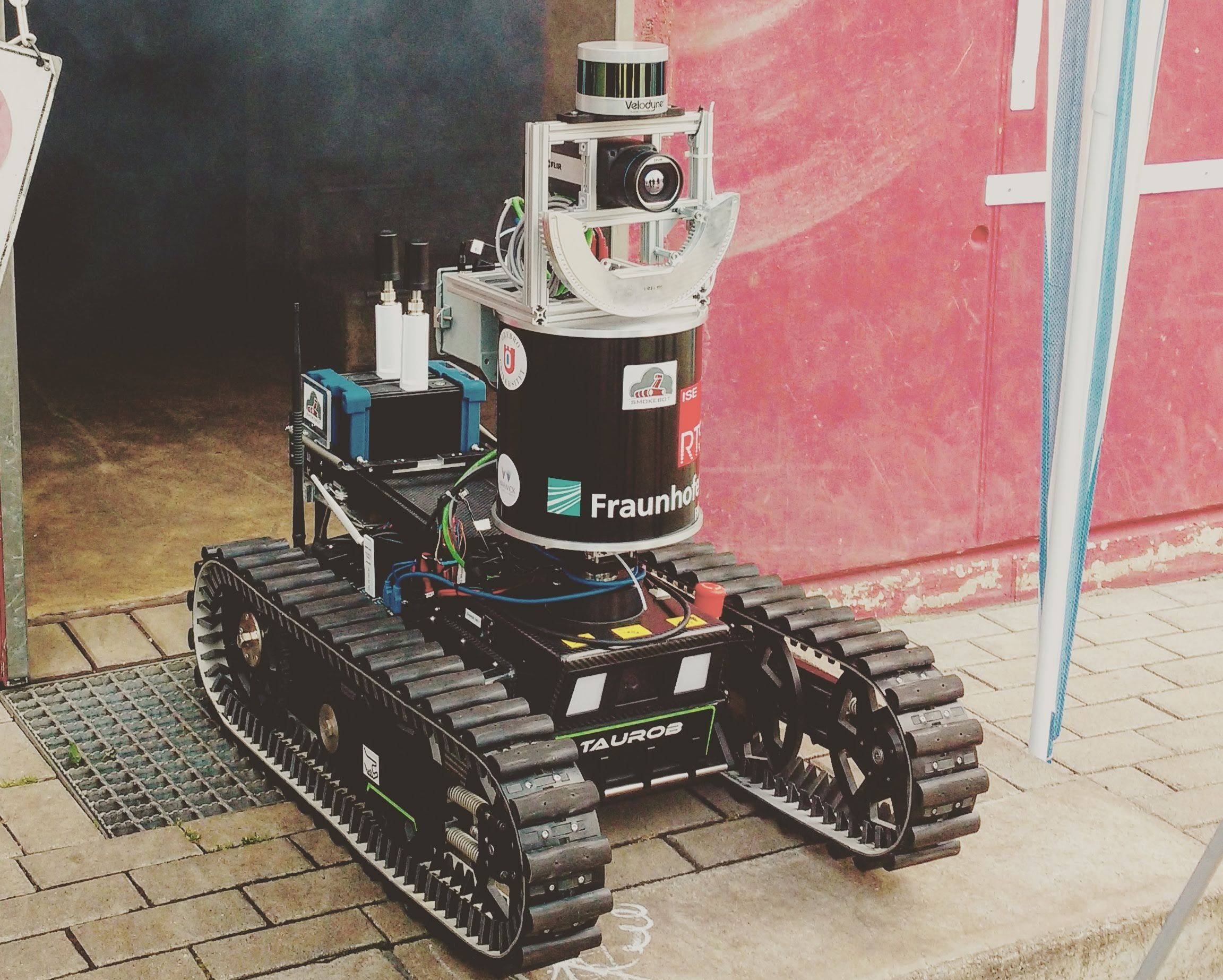

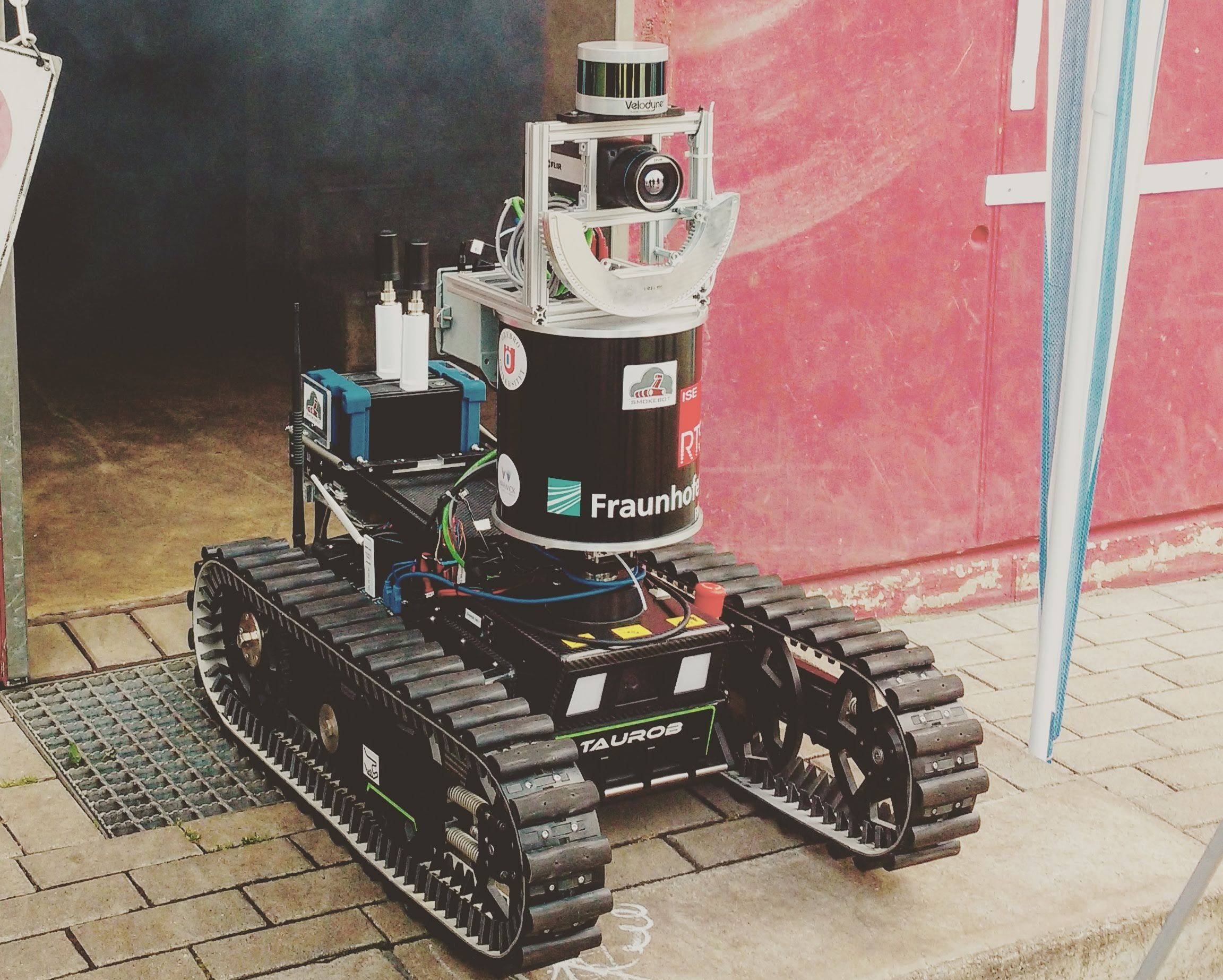

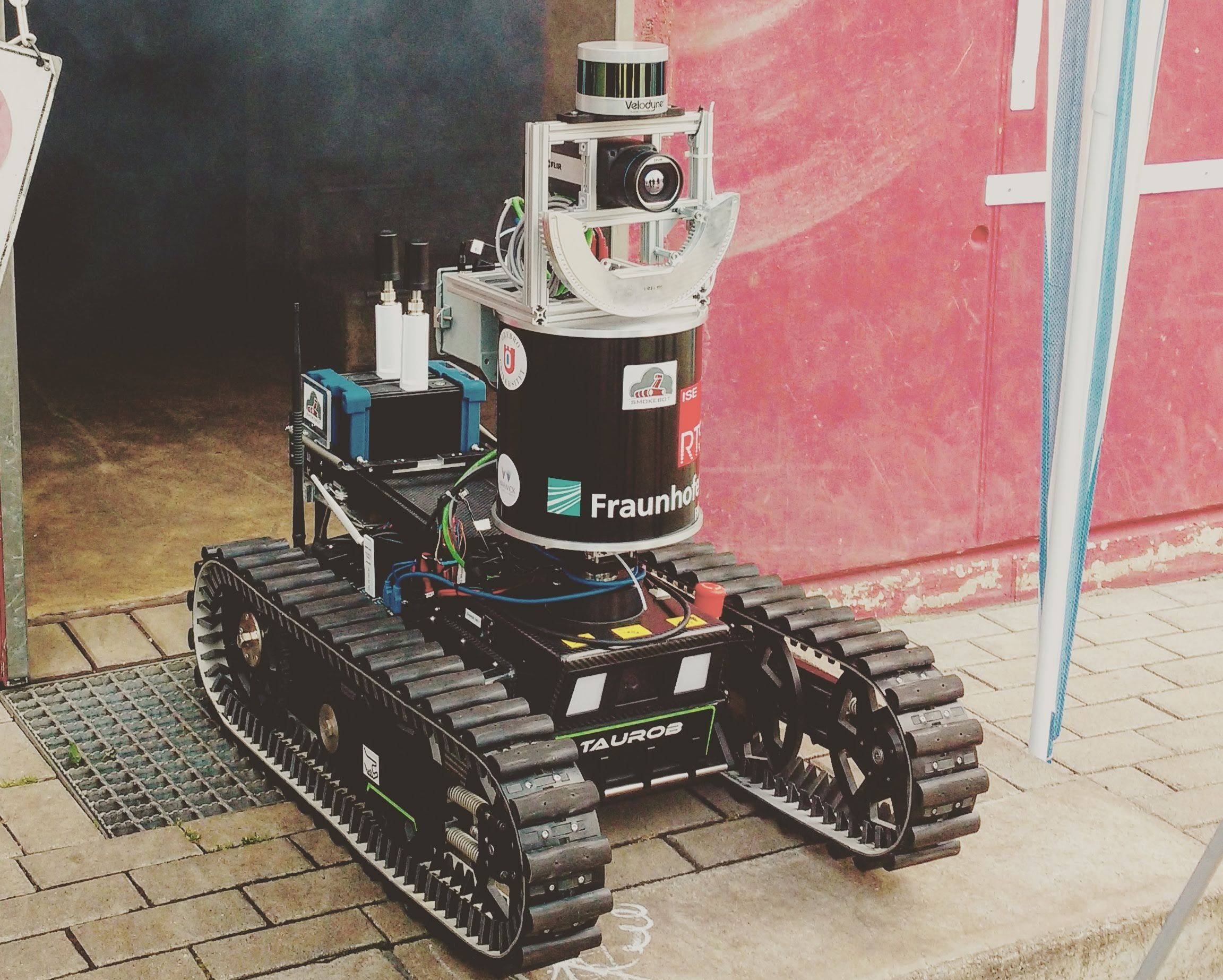

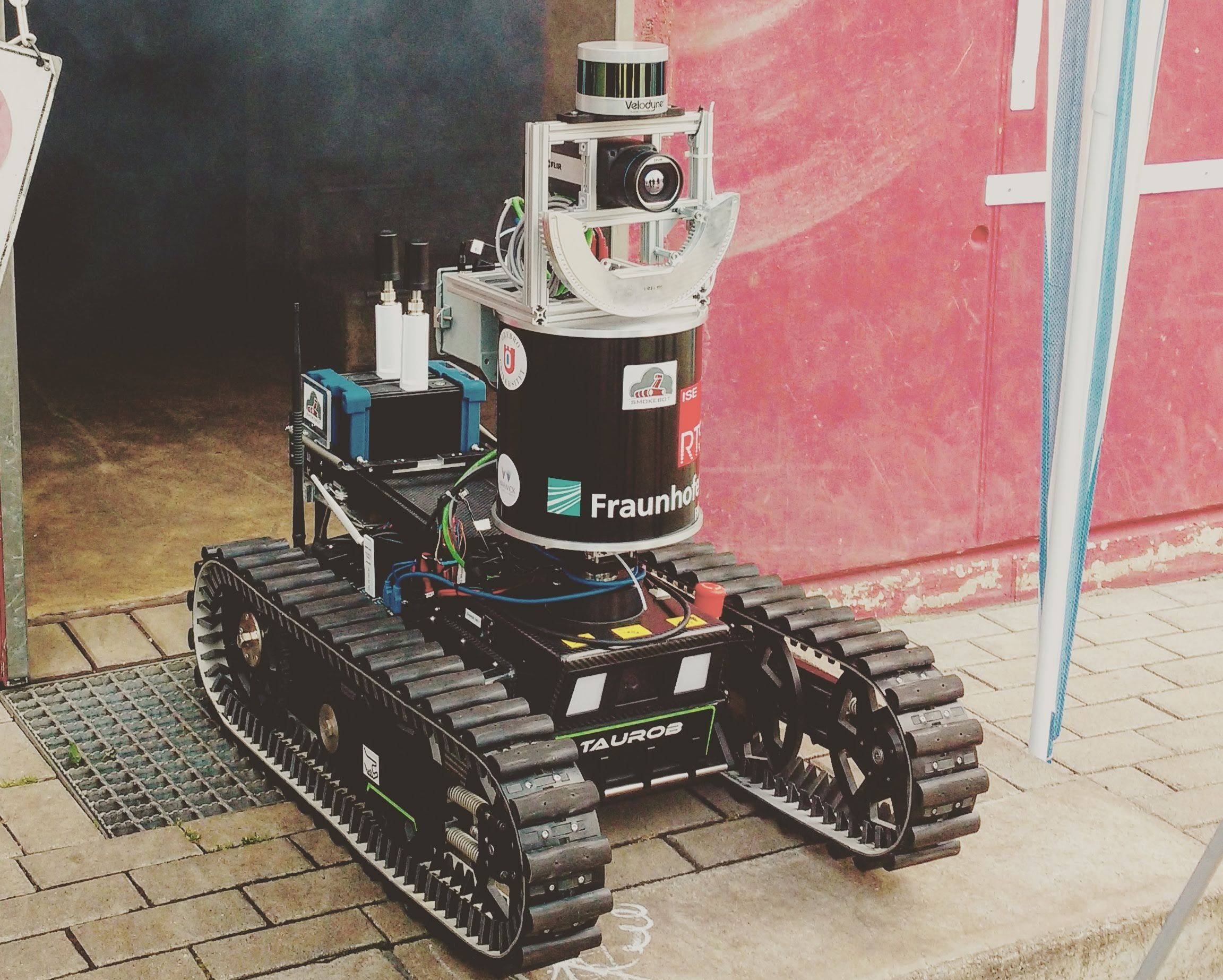

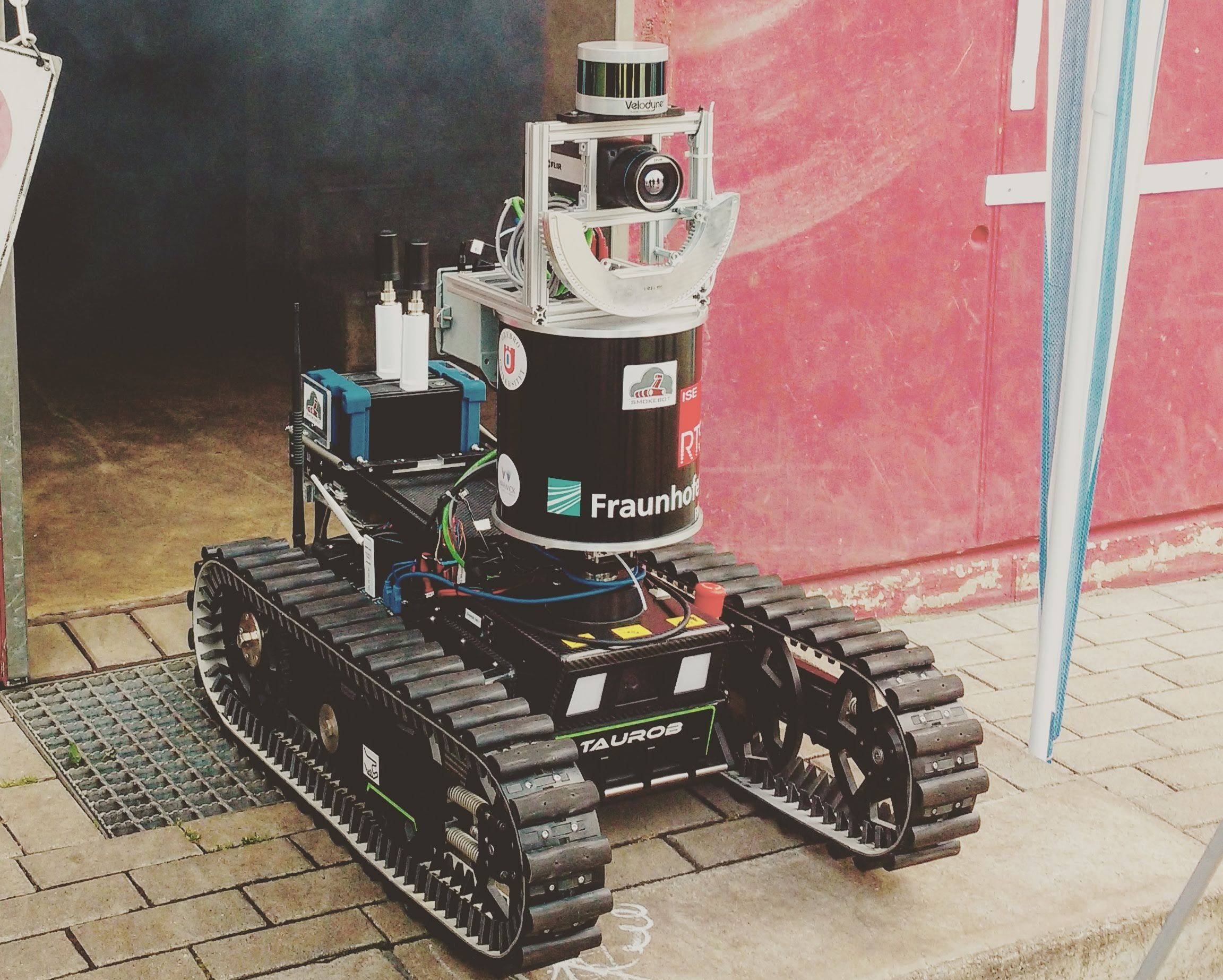

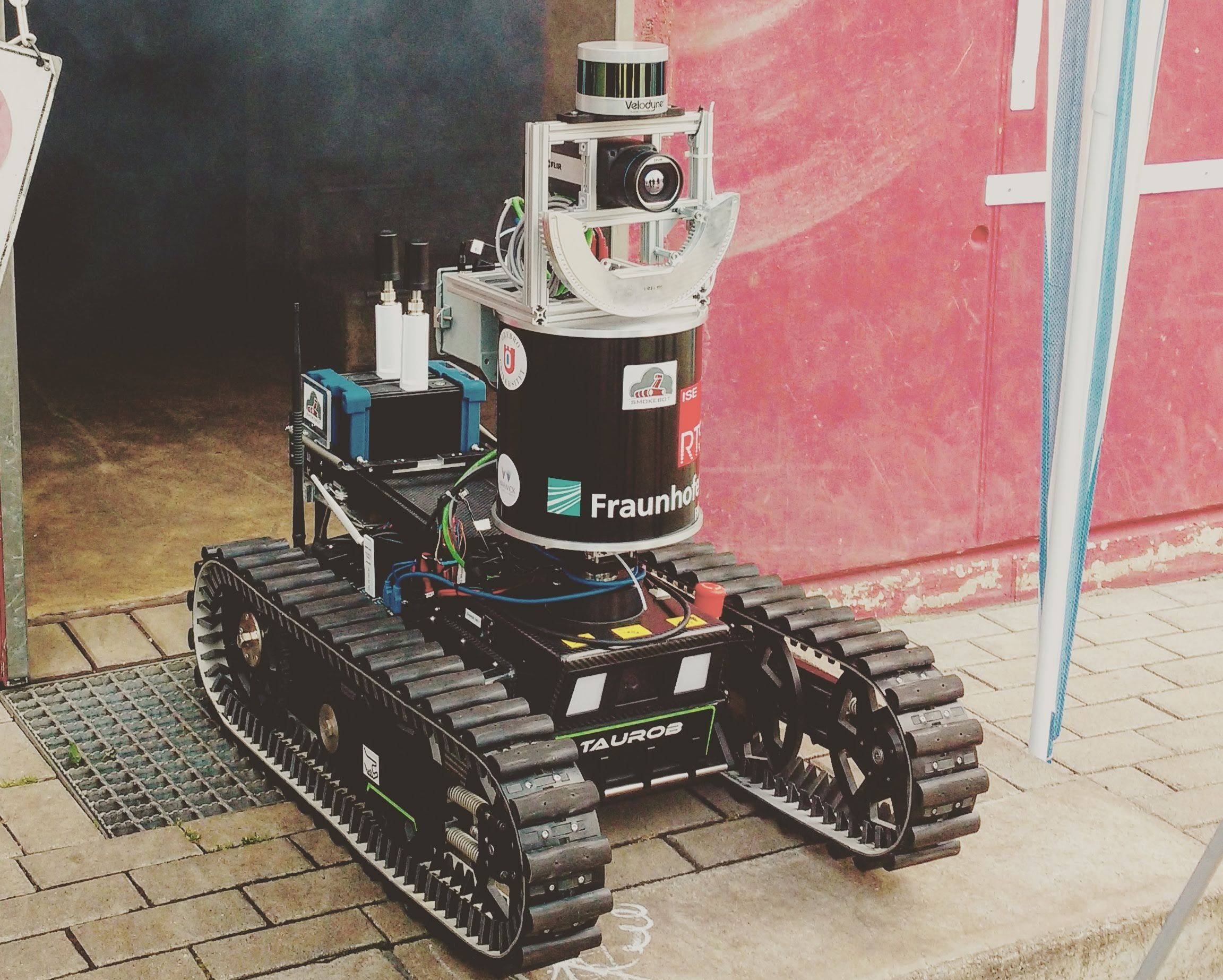

I ended up making the robotics_and_ai community. Let’s see how it goes :)

Interesting article! I wholeheartedly agree on every other topic, but I partly disagree on the AI front. AI as a topic is much wider than crypto and NFTs, and better defined than the metaverse. It has concrete uses, from document extraction to protein prediction and data analysis. However, where I agree with the author is that, on platforms like LinkedIn, you find so many quacks who are suddenly experts, telling you how AI is the game changer you didn’t know about for something completely unrelated. There is a lot of pseudoscience and false (but seemingly logical) conclusions thrown around. And that’s where the grift is: not in the technology, but in the marketing by scammers.

E.g. they keep pushing the idea that, as an expert, you will be replaced by AI unless you adapt, and that only those who use AI will survive. However, early research shows the opposite: using AI actually helps low performers and has little impact on experts. Here is an interesting podcast on it

I think you are absolutely correct about the interpretation of the photon count :)

In the only loaf-like picture I have, it didn’t find my cat because it’s only her butt :(